It is April 2026, and the honeymoon phase of generative AI has ended. Despite a global investment surge reaching $40 billion, a recent MIT Sloan and BCG study reveals a stark reality: 95% of generative AI projects fail to deliver measurable financial returns. This gap between spend and value is not a failure of large language models (LLMs) themselves but of implementation. Most organizations treat AI as a sophisticated search engine or a faster typewriter. Used without a rigorous structural framework, GPT-4.1 tops out at 39% accuracy on complex reasoning tasks. To close the gap, engineers must stop 'prompting' and start architecting cognitive workflows.

The shift from simple automation to strategic partnership requires a fundamental change in how we view generative AI use cases. We are no longer in the era of 'write me a blog post' or 'fix this bug.' Today, 41% of global code is AI-assisted, and Claude Code agents now account for 4% of all GitHub commits. However, the developers seeing the most significant gains—saving an average of 3.6 hours weekly—are those who apply an engineering mindset to the interaction itself. This guide breaks down the transition from unstructured output to high-fidelity strategic problem-solving.

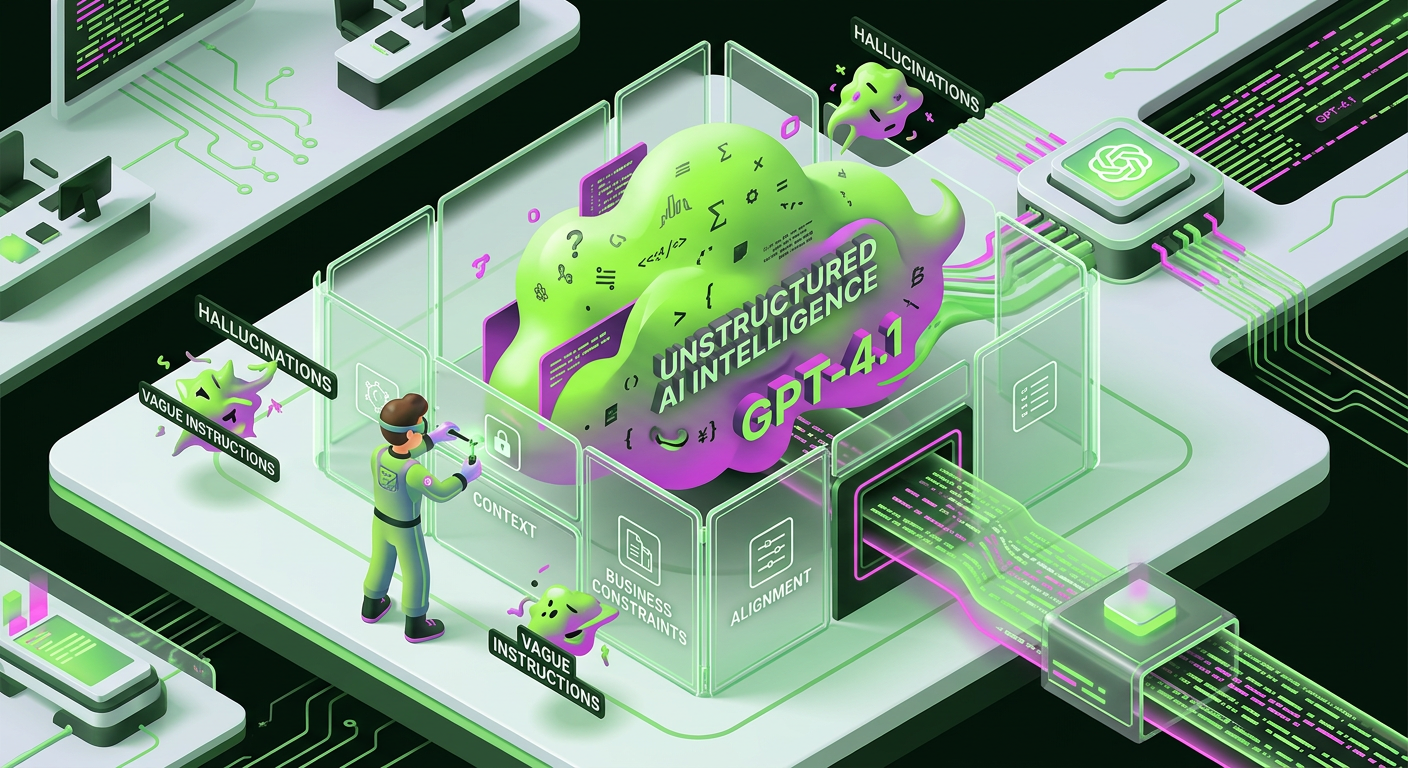

The 39% Accuracy Trap: Why Structure Dictates Outcome

Raw intelligence in a model like GPT-4.1 is useless without a container. When an engineer provides a vague instruction, the model hallucinates context to fill the void. This 'unstructured' approach is the primary reason for the 39% accuracy rate observed in complex reasoning tasks. The model isn't failing to think; it's failing to align with the invisible constraints of your specific business environment. To solve this, we must adopt what João Bento, CEO of CTT and an early AI doctorate holder, describes as the 'engineer's way of looking at problems.' This involves a balance of creative freedom and rigid structural logic.

Strategic generative AI use cases require a departure from the 'black box' mentality. You cannot simply input a problem and expect a finished solution. Instead, treat the AI as a junior partner that needs a comprehensive manual. Logical scaffolding spares the model from guessing at context, so its output addresses the specific logic of the problem. This structural discipline is what separates a failed pilot project from a production-ready AI integration that actually moves the needle on ROI.

Step 1: Precision Problem Definition and Objective Mapping

Most generative AI use cases fail at the very first sentence. A generic prompt like 'optimize our supply chain' gives the model nothing concrete to reason about, so it returns generic advice. Precision is the only currency that matters. Define the problem with the same granularity you would use for a Jira ticket or a technical specification document: the specific metric you intend to move, the timeframe for the intervention, and the exact stakeholders involved.

Consider a scenario where you are using AI to redesign a legacy system. Instead of asking for 'modernization tips,' define the task as: 'Analyze this monolithic Java architecture to identify three candidates for microservices transition, prioritizing services with the highest database contention.' This level of detail forces the AI to bypass generic advice and engage with the structural reality of your request. It transforms the AI from a generalist into a specialized consultant. Without this precision, you are essentially asking a GPS for 'directions' without providing a destination.
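One way to enforce this precision is to make the problem statement a structured object that refuses to render until every field is filled in. The sketch below is a minimal illustration, not a prescribed format: `ProblemSpec`, its field names, and the example metric, timeframe, and stakeholder values are all assumptions introduced here.

```python
from dataclasses import dataclass, field


@dataclass
class ProblemSpec:
    """Hypothetical container for the fields a precise problem statement needs."""
    task: str        # what the model should do
    metric: str      # the specific metric you intend to move
    timeframe: str   # the window for the intervention
    stakeholders: list[str] = field(default_factory=list)

    def missing_fields(self) -> list[str]:
        """Return the names of any fields left blank."""
        missing = [name for name in ("task", "metric", "timeframe")
                   if not getattr(self, name).strip()]
        if not self.stakeholders:
            missing.append("stakeholders")
        return missing

    def to_prompt(self) -> str:
        """Render the spec as a prompt, refusing to emit an underspecified one."""
        missing = self.missing_fields()
        if missing:
            raise ValueError(f"Underspecified problem, missing: {', '.join(missing)}")
        return "\n".join([
            f"Task: {self.task}",
            f"Target metric: {self.metric}",
            f"Timeframe: {self.timeframe}",
            f"Stakeholders: {', '.join(self.stakeholders)}",
        ])


spec = ProblemSpec(
    task=("Analyze this monolithic Java architecture to identify three "
          "candidates for microservices transition, prioritizing services "
          "with the highest database contention."),
    metric="database contention per service",    # illustrative value
    timeframe="next two quarters",               # illustrative value
    stakeholders=["platform team", "DBA team"],  # illustrative values
)
print(spec.to_prompt())
```

A vague request such as `ProblemSpec(task="optimize our supply chain", metric="", timeframe="")` raises a `ValueError` naming the missing fields, which surfaces the gap before any tokens are spent.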

Step 2: Establishing Environmental Constraints and Non-Negotiables

Generative AI operates in a vacuum unless you provide the walls. In a corporate setting, a solution that is technically brilliant but violates regulatory compliance is a failure. Every strategic use case must be wrapped in a layer of constraints. These include your current tech stack, budget limitations, security protocols, and even cultural nuances of the end-user. If the AI doesn't know you are restricted to AWS GovCloud, it might suggest a suite of tools that are prohibited in your environment.

To implement this, create a 'Constraint Manifest' for your AI interactions. List your mandatory technologies (e.g., 'Must use PostgreSQL 15+'), your performance benchmarks (e.g., 'API latency must remain under 200ms'), and your 'never' list (e.g., 'Do not use third-party libraries without an MIT license'). By front-loading these limitations, you prevent the AI from wasting tokens on unviable paths. This is particularly critical in 2026, where the complexity of hybrid-cloud environments makes 'hallucinated' architectural suggestions a major security risk.
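The Constraint Manifest described above could be kept as a small data structure and rendered in front of every task prompt. This is a sketch under assumptions: the `ConstraintManifest` class, its three field names, and the `with_constraints` helper are hypothetical, and the example task is invented for illustration. The constraint strings themselves are the ones quoted in the text.

```python
from dataclasses import dataclass, field


@dataclass
class ConstraintManifest:
    """Hypothetical structure for a 'Constraint Manifest'."""
    mandatory: list[str] = field(default_factory=list)   # required technologies
    benchmarks: list[str] = field(default_factory=list)  # performance targets
    never: list[str] = field(default_factory=list)       # prohibited choices

    def render(self) -> str:
        """Format the manifest as a preamble to place before the task prompt."""
        sections = [
            ("Mandatory technologies", self.mandatory),
            ("Performance benchmarks", self.benchmarks),
            ("Never", self.never),
        ]
        lines = ["Constraints (non-negotiable):"]
        for title, items in sections:
            if items:  # skip empty sections so the preamble stays short
                lines.append(f"{title}:")
                lines.extend(f"- {item}" for item in items)
        return "\n".join(lines)


def with_constraints(manifest: ConstraintManifest, task: str) -> str:
    """Front-load the constraints so they precede the task itself."""
    return f"{manifest.render()}\n\nTask: {task}"


manifest = ConstraintManifest(
    mandatory=["Must use PostgreSQL 15+"],
    benchmarks=["API latency must remain under 200ms"],
    never=["Do not use third-party libraries without an MIT license"],
)
# The task below is an invented example.
print(with_constraints(manifest, "Propose a caching layer for the orders API."))
```

Keeping the manifest in one place means every prompt in a project carries the same non-negotiables, rather than relying on each engineer to remember them.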

Step 3: The Plan Mode Revolution and CLAUDE.md Integration

The introduction of 'Plan Mode' in agents like Claude Code has fundamentally changed the workflow of high-performing engineering teams. Unlike standard chat interfaces, Plan Mode allows the AI to ingest an entire codebase or project directory, propose a multi-step execution strategy, and wait for human validation before touching a single file. This is the practical application of the 'think before you act' principle. To maximize this, engineers are now using CLAUDE.md files—a persistent 'instruction manual' located at the root of the project.

A CLAUDE.md file should contain your architectural preferences, naming conventions, and strategic priorities. For example, you might specify that all state management must use Signals instead of Redux, or that every function must include a specific type of error handling. Claude Code loads this file into its context at the start of a session, so the instructions are already in place when the agent enters Plan Mode. Every generative AI use case handled by the agent is therefore aligned with your team's standards by default. It effectively 'downloads' your senior architect's preferences into the AI's working memory, keeping thousands of lines of generated code or pages of strategic planning consistent.
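Because CLAUDE.md is plain markdown, a team could keep its conventions in one structured place and generate the file from it. The following is a rough sketch, assuming a hypothetical `CONVENTIONS` mapping and helper names. The section titles are illustrative rather than a schema any tool requires, and the naming rule is an invented placeholder.

```python
from pathlib import Path

# Hypothetical team conventions. The first two rules echo the examples in
# the text; the naming rule is a placeholder added for illustration.
CONVENTIONS = {
    "Architecture": ["All state management must use Signals instead of Redux."],
    "Error handling": ["Every function must handle errors explicitly."],
    "Naming": ["Use descriptive, full-word identifiers."],
}


def render_claude_md(conventions: dict[str, list[str]]) -> str:
    """Render conventions as markdown: one heading per section, one bullet per rule."""
    parts = ["# Project conventions", ""]
    for section, rules in conventions.items():
        parts.append(f"## {section}")
        parts.extend(f"- {rule}" for rule in rules)
        parts.append("")  # blank line between sections
    return "\n".join(parts)


def write_claude_md(project_root: Path, conventions: dict[str, list[str]]) -> Path:
    """Write CLAUDE.md at the project root and return its path."""
    target = project_root / "CLAUDE.md"
    target.write_text(render_claude_md(conventions), encoding="utf-8")
    return target


print(render_claude_md(CONVENTIONS))
```

Calling `write_claude_md(Path("."), CONVENTIONS)` from the repository root would place the file where the agent looks for it. Generating it from data also makes changes to team standards reviewable in version control.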

Step 4: Decomposing Monoliths into Cognitive Sub-Tasks

Even the most advanced models in 2026 struggle with 'long-horizon' reasoning—tasks that require 50+ steps to complete. The most successful generative AI use cases involve breaking a massive strategic goal into a series of interconnected sub-tasks. This is the 'Divide and Conquer' algorithm applied to human-AI collaboration. If you are using AI to plan a market entry strategy, do not ask for the whole plan at once. Break it down into market analysis, competitor benchmarking, regulatory mapping, and finally, the execution roadmap.

For each sub-task, the AI should produce a discrete output that you can verify. This iterative verification prevents 'error compounding,' where a small mistake in step one leads to a total hallucination by step ten. By reviewing the AI's work at each milestone, you maintain control over the strategic direction. This approach also allows you to swap models for different tasks—perhaps using a high-reasoning model for the initial architecture and a faster, cheaper model for the boilerplate documentation. This modularity is key to managing the high costs associated with enterprise-grade AI.
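The decomposition and checkpointing described here can be sketched as a small pipeline in which each sub-task names its own model and its own verification step. Everything below is an assumption made for illustration: `SubTask`, `run_pipeline`, and the two stub models stand in for real API clients, and the `non_empty` check stands in for human review at each milestone. Which sub-task gets which model is also an arbitrary choice here.

```python
from dataclasses import dataclass
from typing import Callable

# A "model" is any callable from prompt text to output text. These are
# stand-ins; a real setup would wrap actual API clients.
Model = Callable[[str], str]


@dataclass
class SubTask:
    name: str
    prompt: str
    model: Model                   # which model handles this sub-task
    verify: Callable[[str], bool]  # checkpoint: human review or automated check


def run_pipeline(subtasks: list[SubTask]) -> dict[str, str]:
    """Run sub-tasks in order, feeding verified outputs forward.

    Stops at the first failed verification so an early mistake cannot
    compound into later steps.
    """
    results: dict[str, str] = {}
    for task in subtasks:
        # Earlier verified outputs become context for the next sub-task.
        context = "\n".join(f"[{name}] {text}" for name, text in results.items())
        output = task.model(f"{context}\n\n{task.prompt}".strip())
        if not task.verify(output):
            raise RuntimeError(f"Verification failed at sub-task '{task.name}'")
        results[task.name] = output
    return results


# Stub models for demonstration only.
def reasoning_model(prompt: str) -> str:
    return f"analysis({len(prompt)} chars of input)"


def fast_model(prompt: str) -> str:
    return f"summary({len(prompt)} chars of input)"


def non_empty(text: str) -> bool:
    return bool(text.strip())


plan = [
    SubTask("market_analysis", "Analyze the target market.", reasoning_model, non_empty),
    SubTask("competitor_benchmarking", "Benchmark the main competitors.", reasoning_model, non_empty),
    SubTask("regulatory_mapping", "Map the relevant regulations.", reasoning_model, non_empty),
    SubTask("execution_roadmap", "Draft the execution roadmap.", fast_model, non_empty),
]
results = run_pipeline(plan)
for name, text in results.items():
    print(f"{name}: {text}")
```

The design choice worth noting is that verification is part of the task definition, so a step cannot be added to the plan without also deciding how its output will be checked.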

Step 5: The Feedback Loop and Iterative Refinement

Strategic AI use is not a 'one-and-done' transaction. It is a conversation. The first output from an AI is rarely the final product; it is a draft that requires 'tuning.' In 2026, the most effective engineers spend more time refining the AI's logic than they do writing original text. This involves pointing out logical inconsistencies, asking the AI to 'red-team' its own proposal, or providing new data points that were previously unavailable.

When the AI provides a plan, ask it: 'What are the three biggest risks in this approach?' or 'How would this scale if our user base tripled overnight?' This forces the model to re-examine its assumptions and often reveals hidden flaws in the initial logic. This iterative process is where the real value is created. It moves the interaction from a simple command-response to a deep analytical session. Remember, the goal is to use the AI to expand your own cognitive capacity, not to replace it. The final decision always rests with the human engineer who understands the 'why' behind the 'what.'
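A refinement loop of this kind might look like the sketch below. It is an illustration under assumptions: `refine`, the `accept` callback (standing in for the human decision), and `stub_model` are hypothetical names, and the third critique question is an added example alongside the two quoted in the text.

```python
from typing import Callable

Model = Callable[[str], str]  # stand-in for a real model client

# The first two questions come from the text; the third is an added example.
CRITIQUE_QUESTIONS = [
    "What are the three biggest risks in this approach?",
    "How would this scale if our user base tripled overnight?",
    "Red-team this proposal: where is the logic inconsistent?",
]


def refine(model: Model, draft: str, questions: list[str],
           accept: Callable[[str], bool]) -> tuple[str, list[str]]:
    """Iteratively critique and revise a draft.

    For each question the model first critiques the current draft, then
    revises it in light of that critique. `accept` represents the human
    decision: a rejected revision is discarded and the prior draft is kept.
    Returns the final draft and the critiques gathered along the way.
    """
    critiques: list[str] = []
    for question in questions:
        critique = model(f"Proposal:\n{draft}\n\nQuestion: {question}")
        critiques.append(critique)
        revised = model(
            f"Proposal:\n{draft}\n\nCritique:\n{critique}\n\nRevise the proposal."
        )
        if accept(revised):  # the human keeps the final say
            draft = revised
    return draft, critiques


def stub_model(prompt: str) -> str:
    """Demonstration stub: appends a marker on revision, else returns a fixed critique."""
    if prompt.endswith("Revise the proposal."):
        return prompt.split("\n")[1] + " +fix"  # line 1 holds the single-line draft
    return "risk noted"


final, critiques = refine(stub_model, "v0", CRITIQUE_QUESTIONS, accept=lambda d: True)
print(final)
print(critiques)
```

Returning the critiques alongside the final draft keeps a record of why each revision happened, which is useful when the engineer has to justify the final decision.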

Avoiding the 'Shiny Object' Syndrome in 2026

With new models being released almost monthly, it is easy to fall into the trap of constantly switching tools. However, the most successful generative AI use cases are built on stable, well-understood workflows. A team that has mastered the use of CLAUDE.md and Plan Mode on an older model will consistently outperform a team using the latest 'bleeding edge' model without any structure. Focus on the framework, not the tool.

Another common pitfall is the 'automation paradox.' As AI handles more of the 'how,' humans must become better at the 'what' and 'why.' If your engineering team is saving 3.6 hours a week, that time must be reinvested into high-level architectural thinking and cross-functional strategy. If that time is simply absorbed into more meetings, the ROI of your AI investment will remain at zero. The 'engineer's mindset' is about efficiency, but it is also about impact. Use the reclaimed time to solve the problems that AI cannot—the ones that require empathy, complex stakeholder management, and long-term vision.

The Future of Engineering-Led AI Strategy

With AI adoption in engineering teams projected to have reached 90% by the end of 2025, the role of the developer is shifting toward that of a 'Cognitive Architect.' You are no longer just writing code; you are designing the systems that write code. This requires a deep understanding of symbolic logic, system design, and the nuances of LLM behavior. The 95% failure rate in AI projects is a wake-up call. It shows that money and compute power are not enough. Success in the age of generative AI requires a return to the fundamentals of engineering: structure, discipline, and a relentless focus on the problem at hand. By building a robust cognitive framework, you ensure that your AI use cases deliver not just code, but lasting strategic value.