The mobile gaming sector is undergoing a structural shift in how user acquisition (UA) and content generation function. Agile Chinese mobile game studios are quietly leading this shift, systematically replacing subscription-based "black-box" AI tools with open-source ComfyUI workflows. This pivot allows studios to scale UA tenfold without adding headcount. The strategy succeeds because it treats AI not as a magic wand, but as a technical stack. By applying API design principles to AI content generation, developers are turning chaotic prompting into predictable, scalable industrial pipelines.

The Shift from Prompting to Workflow Engineering

The era of simple text prompting is dead. In 2026, mobile game developers have moved toward a "workflow engineering" paradigm. They treat AI content pipelines with the same rigor applied to backend software development. This involves constructing modular, reusable ComfyUI graphs that function like a microservices architecture. When you apply API design principles to a node-based graph, each node becomes a functional endpoint with strictly defined inputs, processing logic, and outputs.
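To make "each node becomes a functional endpoint" concrete, here is a minimal sketch that follows ComfyUI's custom-node class convention (`INPUT_TYPES`, `RETURN_TYPES`, `FUNCTION`, `CATEGORY`). The node itself (`AdCopyFormatter`), its parameter names, its defaults, and its category are hypothetical and chosen only for illustration.

```python
class AdCopyFormatter:
    """Hypothetical node: joins a hero name and a hook into one prompt string."""

    @classmethod
    def INPUT_TYPES(cls):
        # Declared inputs act as the node's request schema.
        return {
            "required": {
                "hero_name": ("STRING", {"default": "Aria"}),
                "hook": ("STRING", {"default": "defends the last gate", "multiline": True}),
                "max_chars": ("INT", {"default": 120, "min": 20, "max": 400}),
            }
        }

    RETURN_TYPES = ("STRING",)  # Declared output acts as the response schema.
    RETURN_NAMES = ("prompt",)
    FUNCTION = "build"          # Name of the method executed for this node.
    CATEGORY = "ua/text"        # Hypothetical menu category.

    def build(self, hero_name, hook, max_chars):
        prompt = f"{hero_name.strip()} {hook.strip()}"
        # The return value is a tuple matching RETURN_TYPES.
        return (prompt[:max_chars],)


# Mapping a custom-node package exposes so the node can be registered.
NODE_CLASS_MAPPINGS = {"AdCopyFormatter": AdCopyFormatter}
```

The point of the sketch is the contract: typed inputs with bounds, one processing method, and a typed output, which is what lets downstream nodes rely on it the way callers rely on an endpoint.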

This structured approach ensures consistency across thousands of creative variations. In traditional software, a well-designed API endpoint is predictable and, where possible, idempotent; in ComfyUI, a well-engineered workflow must produce consistent character features across different aspect ratios and lighting conditions. By moving away from the per-generation costs of proprietary services, studios iterate on concepts without financial friction. The use of open-source models like LTX-2.3 and FLUX.2 Klein allows for this level of control, ensuring that a hero character looks identical across five hundred different ad variations, a feat nearly impossible with randomized black-box prompting.
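One small piece of that predictability can be sketched in code: deriving the sampler seed deterministically instead of leaving it random. The function names and job fields below are hypothetical. A fixed seed removes only one source of variation; keeping a character's features stable would in practice also depend on reference images or similar conditioning, which this sketch does not cover.

```python
import hashlib


def variation_seed(character_id: str, variation: int) -> int:
    """Derive a stable 32-bit seed from a character ID and a variation index."""
    digest = hashlib.sha256(f"{character_id}:{variation}".encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big")


def build_jobs(character_id, aspect_ratios, variations):
    """Create one job per (aspect ratio, variation).

    The seed deliberately ignores the aspect ratio, so a given variation
    starts from the same seed in every ad format.
    """
    return [
        {
            "character": character_id,
            "aspect": aspect,
            "variation": v,
            "seed": variation_seed(character_id, v),
        }
        for aspect in aspect_ratios
        for v in range(variations)
    ]
```

Because the seed is a pure function of its inputs, re-running the same job list reproduces the same seeds, which is the workflow-level analogue of a repeatable API call.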

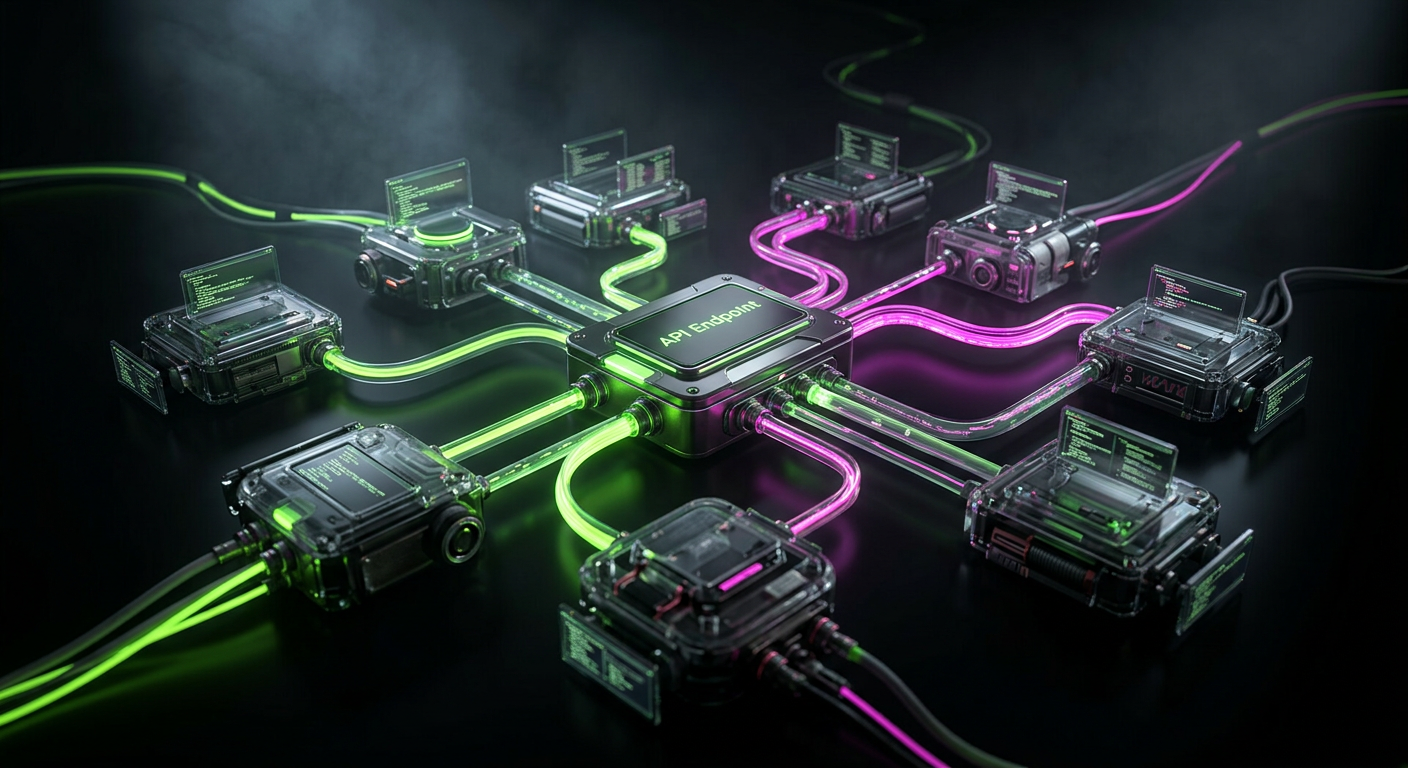

Applying API Design Principles to Node Architectures

To manage the complexity of modern AI pipelines, developers must treat their ComfyUI graphs as a collection of internal APIs. This means prioritizing modularity and separation of concerns. For instance, a workflow designed for a fantasy RPG should have a dedicated "Character Injection" module, an "Environment Context" module, and a "Post-Processing" module. Each of these acts as a microservice within the larger graph.
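The three modules named above can be pictured as functions that share one small contract. This is a plain-Python analogy rather than ComfyUI code: the state keys (`hero_id`, `setting`, `time_of_day`) and the step names are assumptions made for the example.

```python
from typing import Callable, Dict, List

# Shared state handed from module to module; keys are illustrative.
State = Dict[str, object]
Module = Callable[[State], State]


def character_injection(state: State) -> State:
    # Adds a character reference without touching other keys.
    return {**state, "character": f"ref:{state['hero_id']}"}


def environment_context(state: State) -> State:
    # Describes the scene from its own inputs only.
    return {**state, "environment": f"{state['setting']} at {state['time_of_day']}"}


def post_processing(state: State) -> State:
    # Records the finishing steps to apply to the rendered output.
    return {**state, "steps_applied": ["color_grade", "sharpen"]}


def run_pipeline(modules: List[Module], state: State) -> State:
    """Run modules in order; each one returns a new state dict."""
    for module in modules:
        state = module(state)
    return state
```

Each module reads only the keys it needs and returns a new dict, so any one of them can be replaced or reordered without editing the others.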

Effective API design principles dictate that these modules should be loosely coupled. If a studio decides to swap a Stable Diffusion XL backbone for a newer model, the "Environment Context" module should remain functional without a total system overhaul. Documentation within the graph, in the form of notes and grouped nodes, serves as the API documentation for the creative team. This allows technical artists to update the "backend" logic of the workflow while UA managers interact only with the simplified "frontend" parameters. This separation of logic and presentation is what enables rapid scaling in high-pressure mobile gaming markets.
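The backend/frontend split can be sketched against a workflow exported in ComfyUI's API format, where each node id maps to a `class_type` and an `inputs` dict. The workflow below is a heavily trimmed stand-in (real exports carry more nodes and inputs), and the node ids, the `FRONTEND_PARAMS` table, and `apply_params` are hypothetical names for this example.

```python
import copy

# Trimmed stand-in for an API-format workflow: node id -> class_type + inputs.
WORKFLOW = {
    "3": {"class_type": "KSampler", "inputs": {"seed": 0, "steps": 20, "cfg": 7.0}},
    "6": {"class_type": "CLIPTextEncode", "inputs": {"text": "placeholder"}},
}

# The "frontend": friendly parameter name -> (node id, input name).
FRONTEND_PARAMS = {
    "headline_prompt": ("6", "text"),
    "seed": ("3", "seed"),
}


def apply_params(workflow, params):
    """Return a patched copy of the workflow.

    Only parameters listed in FRONTEND_PARAMS may be changed; anything
    else is rejected so the backend logic stays protected.
    """
    patched = copy.deepcopy(workflow)
    for name, value in params.items():
        if name not in FRONTEND_PARAMS:
            raise KeyError(f"'{name}' is not an exposed parameter")
        node_id, input_name = FRONTEND_PARAMS[name]
        patched[node_id]["inputs"][input_name] = value
    return patched
```

A technical artist can renumber or replace nodes and update the mapping table in one place, while a UA manager keeps calling `apply_params` with the same two names.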

NVIDIA's Role in Accelerating Open-Source AI Workflows

NVIDIA has fundamentally changed the accessibility of these engineering-heavy workflows. As of March 2026, the introduction of "App View" for ComfyUI has bridged the gap between technical complexity and user experience. App View provides a panel-based interface that abstracts the underlying node spaghetti. It essentially acts as a UI layer for the underlying API, allowing non-technical creators to adjust sliders and toggles while the complex node logic remains hidden and protected from accidental changes.

Performance breakthroughs on the hardware side have made local execution the most compelling choice for high-volume studios. Native support for NVFP4 and FP8 data formats within ComfyUI now delivers a 2.5x performance boost on NVIDIA RTX 50 Series GPUs. More importantly, these formats offer a 60% reduction in VRAM requirements. These optimizations build on a 40% speed increase observed since September 2025. For a studio running 24/7 generation cycles, these efficiency gains directly translate to lower overhead and faster time-to-market for new ad campaigns.
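As a back-of-the-envelope illustration of what those two figures mean for capacity planning, the sketch below applies the 60% VRAM reduction and the 2.5x speedup to baseline numbers. The baseline values passed in are hypothetical, and the linear scaling of throughput with the speedup is an assumption, not a measured result.

```python
def reduced_vram_gb(baseline_gb: float, reduction: float = 0.60) -> float:
    """VRAM needed after a fractional reduction (0.60 means 60% less)."""
    return baseline_gb * (1.0 - reduction)


def daily_outputs(baseline_per_hour: float, speedup: float = 2.5, hours: float = 24.0) -> float:
    """Outputs per day, assuming throughput scales linearly with the speedup."""
    return baseline_per_hour * speedup * hours
```

Under these assumptions, a workload with a hypothetical 20 GB baseline footprint would need about 8 GB, and a machine producing 10 outputs per hour would produce 600 per day instead of 240.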

Hardware Requirements for 2026 Workflows

To run these advanced engineering pipelines, studios have standardized their hardware tiers. The minimum entry point is now an NVIDIA RTX 3060 with 12GB of VRAM and 16GB of RAM. However, for professional-grade production involving high-resolution video and complex ControlNet stacks, the industry standard has shifted to NVIDIA RTX 4070- or 4080-class cards with at least 16GB of VRAM and 32GB of system RAM. Note that the base RTX 4070 ships with 12GB, so the 16GB requirement implies a higher-memory variant such as the RTX 4070 Ti SUPER. These specs help keep latency low and throughput high at the local hardware level.
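The two tiers can be encoded as a simple preflight check that a pipeline runs before accepting jobs. The thresholds come from the figures above; the function name and the tier labels are hypothetical.

```python
def hardware_tier(vram_gb: int, ram_gb: int) -> str:
    """Classify a workstation against the two tiers described in the text."""
    if vram_gb >= 16 and ram_gb >= 32:
        return "production"   # 16GB+ VRAM and 32GB+ system RAM
    if vram_gb >= 12 and ram_gb >= 16:
        return "minimum"      # RTX 3060-class entry point
    return "unsupported"
```

A machine with 16GB of VRAM but only 16GB of system RAM falls into the minimum tier here, since both conditions must hold for the production tier.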

RTX Video Super Resolution (VSR) and Real-Time Upscaling

A critical component of the 2026 workflow is the integration of RTX Video Super Resolution (VSR) as a native ComfyUI node. VSR offers real-time 4K upscaling of AI-generated video, operating 30 times faster than traditional local upscalers. In the context of mobile UA, where video ads dominate platforms like TikTok and Meta, the ability to generate low-resolution drafts and upscale them instantly to high-fidelity assets is a massive competitive advantage.

This upscaling node functions as a final "optimization API" in the creative pipeline. It allows the initial generation to happen at lower resolutions—saving VRAM and time—before the VSR node handles the heavy lifting of visual refinement. This two-stage process mirrors how modern web applications serve optimized images: generate the core data efficiently, then apply a delivery-focused transformation at the edge. For mobile game studios, this means the difference between producing ten videos a day and producing three hundred.
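The saving from the two-stage approach is easy to quantify. The sketch below works out the draft resolution for a given target and upscale factor; the 4x factor used in the example is an assumption, since the text does not state what resolution drafts are generated at.

```python
def draft_resolution(target_w: int, target_h: int, scale: int):
    """Resolution to generate at so that a `scale`x upscale hits the target."""
    if target_w % scale or target_h % scale:
        raise ValueError("target dimensions must be divisible by the scale factor")
    return target_w // scale, target_h // scale


def pixel_savings(scale: int) -> int:
    """How many times fewer pixels the draft stage has to generate."""
    return scale * scale
```

For a 3840x2160 target with an assumed 4x upscale, the generation stage works at 960x540, which is 16 times fewer pixels per frame than rendering at full resolution.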

The Practical Implications for Mobile Game Development

The integration of AI into the development cycle is no longer limited to marketing. Tools like Armory3D are now enabling full game development—including physics, lighting, and logic—directly within Blender using the Haxe language. This eliminates the traditional, time-consuming export cycles between 3D modeling software and game engines. When combined with ComfyUI, this creates a unified ecosystem where assets are generated, refined, and implemented in a single continuous loop.

The market data supports this aggressive adoption. By the end of 2026, projections indicate that 50% of all UA creatives will either feature AI hooks or be entirely AI-generated. This is a survival requirement for Free-to-Play (F2P) models, which accounted for over 70% of mobile game revenue in 2025. F2P games live and die by their unit economics, specifically the relationship between Lifetime Value (LTV) and Cost Per Install (CPI). By using API design principles to automate creative production, studios lower the cost of each creative experiment. That lets them test more variations for the same budget and find the ads that bring CPI down.
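The LTV and CPI relationship can be made concrete with a small calculation. Folding creative production cost into an "effective" CPI is a modeling choice made for this sketch, and all figures in the usage note are hypothetical.

```python
def effective_cpi(media_spend: float, creative_cost: float, installs: int) -> float:
    """Cost per install including creative production cost (an assumption
    of this sketch; CPI is often reported on media spend alone)."""
    if installs <= 0:
        raise ValueError("installs must be positive")
    return (media_spend + creative_cost) / installs


def ltv_to_cpi(ltv: float, cpi: float) -> float:
    """Ratio above 1.0 means an install returns more than it costs."""
    return ltv / cpi
```

With a hypothetical 9,000 in media spend and 5,000 installs, cutting creative cost from 1,000 to 100 moves the effective CPI from 2.00 to 1.82, and at an LTV of 3.00 the first case gives a ratio of 1.5.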

Context: The Evolution of AI in Creative Industries

We are witnessing a shift away from centralized, high-cost production houses. The winding down of Sony Pictures' Pixomondo on March 19, 2026, serves as a stark reminder that traditional VFX and virtual production models are struggling to compete with decentralized, AI-driven automation. The industry is moving toward leaner, more technical teams that can manage automated pipelines rather than large groups of manual artists.

The open-source community is the primary engine of this change. The "OpenClaw" ecosystem now features over 5,490 community-built skills for AI agents. These skills allow developers to add specific functionalities to their ComfyUI workflows—such as automated sentiment analysis for ad copy or real-time trend tracking—further extending the "API" capabilities of their creative stacks. This collaborative environment ensures that even small indie studios have access to the same level of technical sophistication as industry giants.

Challenges in Standardizing AI Workflows

Despite the rapid progress, several hurdles remain. The most significant gap is the lack of universal API design standards for node-based environments. As workflows become more complex, the need for standardized versioning, documentation, and interoperability between different custom nodes becomes critical. Without these standards, studios risk accumulating "technical debt" in their AI pipelines, where a single update to a community node can break an entire production line.
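Until such standards exist, one defensive habit borrowed from software dependency management is to pin custom-node versions and check for drift before a production run. The sketch below assumes a studio maintains its own manifest of pinned versions; the package names and version strings in the usage note are hypothetical.

```python
def find_drift(pinned: dict, installed: dict) -> dict:
    """Map each drifting node package to (pinned_version, installed_version).

    A pinned package that is not installed is reported with None as its
    installed version. Packages that are installed but not pinned are ignored.
    """
    drift = {}
    for name, wanted in pinned.items():
        found = installed.get(name)
        if found != wanted:
            drift[name] = (wanted, found)
    return drift
```

For example, `find_drift({"upscale-pack": "1.4.0"}, {"upscale-pack": "1.5.0"})` reports that package as drifted, and a pipeline could refuse to start until the result is empty.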

Legal and copyright frameworks also remain in a state of flux. While the technical ability to use open-source datasets for commercial assets is clear, the legal status of training data in 2026 is still being debated in various jurisdictions. Furthermore, major platforms like Apple and Google are continuously updating their policies regarding the labeling of AI-generated content. Studios must remain agile, ensuring their engineered pipelines can adapt to new disclosure requirements without requiring a complete rebuild of their creative logic.

The Engineering Mindset for Future Growth

Success in the 2026 mobile gaming market requires a total embrace of the engineering mindset. It is no longer enough to have a creative team that knows how to use AI; you need a technical team that knows how to build AI systems. By applying API design principles to ComfyUI, studios transform a volatile creative process into a stable, scalable, and highly profitable engine. The future of mobile gaming belongs to those who treat their creative pipeline as a piece of high-performance software, optimized for speed, modularity, and relentless iteration.