The artificial intelligence landscape evolves rapidly. Engineers must make informed choices when building on large language models (LLMs). Selecting the right open-source model can determine whether a project succeeds, because an open model gives you control over deployment and room for customization. This guide presents the leading open-source LLMs available in 2026 and the trade-offs to weigh when choosing among them.

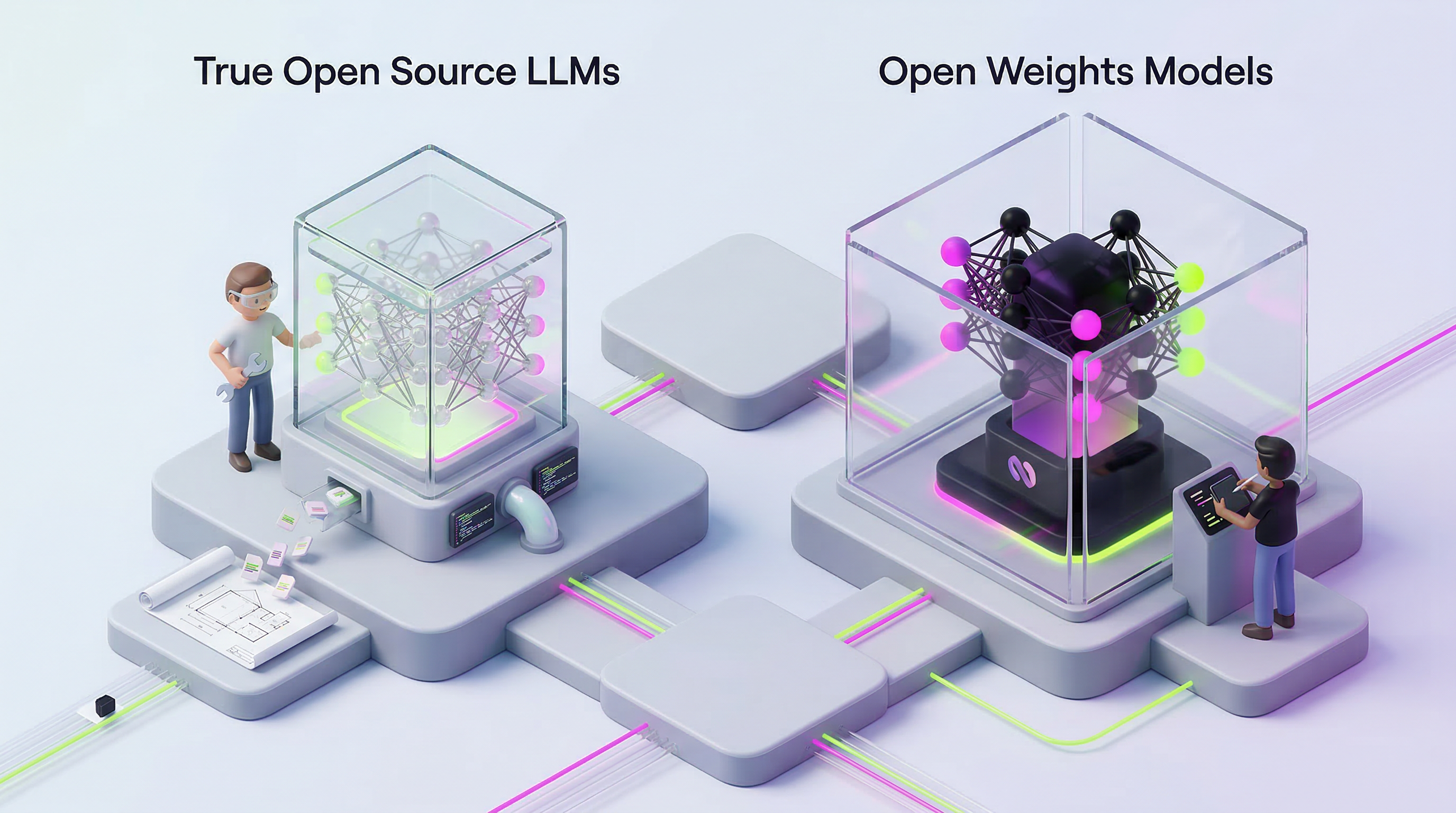

Open Source vs. Open Weights: A Key Distinction

Before exploring specific models, understand a fundamental distinction. True open-source LLMs adhere to the Open Source Initiative (OSI) definition. This means public architecture, code, and weights. It often includes training data. You gain full transparency and modification freedom.

Many powerful models are "open weights." Their parameters are public. This allows self-hosting and fine-tuning. Licensing might carry restrictions, such as commercial use limits or attribution requirements. For practical deployment, many teams treat these as functionally open-source. They prioritize the ability to run them on their own infrastructure. This distinction matters for long-term strategy and compliance.

S-Tier Open Source AI Models

The performance gap between open-source and proprietary AI models has largely closed. Today's S-Tier open-source models compete directly with leading commercial systems. These models excel across various benchmarks, from reasoning to coding. Choosing one requires aligning its strengths with your project's core needs.

Kimi K2.5 (Moonshot)

Kimi K2.5 stands out for its frontier-class capabilities. It features 1 trillion parameters and a 262K context window. It achieves near-perfect HumanEval scores (99.0). It also leads in MMLU (92.0), IFEval (94.0), MATH-500 (98.0), and GPQA Diamond (87.6). Its high Chatbot Arena rating (1447) confirms its robust conversational ability. This model is optimal for top-tier coding, complex mathematical problems, advanced reasoning, and strict instruction adherence across multiple domains.

GLM-4.7 (Zhipu AI)

GLM-4.7 offers a well-rounded profile. It has 355 billion parameters and a 200K context window. It boasts strong HumanEval (94.2), AIME 2025 (95.7), and GPQA Diamond (85.7) scores. Its LiveCodeBench performance (84.9) makes it a solid choice for coding agents and complex scientific Q&A. Consider GLM-4.7 for projects demanding strong reasoning and efficient code generation.

GLM-5 (Zhipu AI)

GLM-5, a larger sibling of GLM-4.7, has 744 billion parameters and a 200K context window. It leads the Chatbot Arena with a 1454 rating, which indicates superior conversational quality. Its SWE-bench Verified score (77.8) and strong GPQA Diamond (86.0) highlight its depth in software engineering and scientific reasoning. GLM-5 is suitable for sophisticated conversational AI and large-scale software development tasks.

MiniMax M2.5 (MiniMax)

MiniMax M2.5 has 230 billion parameters and a 205K context window. It shines in real-world software engineering, holding the highest SWE-bench Verified score in this list (80.2). That result makes it a strong fit for coding environments, including code review and bug fixing. If your core problem involves code, M2.5 warrants consideration.

DeepSeek V3.2 (DeepSeek)

DeepSeek V3.2 offers 685 billion parameters and a 130K context window. It delivers strong MMLU-Pro (85.0), AIME 2025 (89.3), and GPQA Diamond (79.9) performance. Its Chatbot Arena rating (1423) is competitive. DeepSeek V3.2 is released under an MIT License. This provides maximum flexibility. It is ideal for general reasoning, agentic workflows, and teams prioritizing completely open licensing.

Step-3.5-Flash (Stepfun)

Step-3.5-Flash has 196 billion parameters and a 262K context window. It excels in specific coding and mathematical benchmarks, with strong SWE-bench Verified (74.4), LiveCodeBench (86.4), and AIME 2025 (97.3) scores. For high-performance coding and advanced mathematical problem-solving, Step-3.5-Flash offers a compelling option.
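To compare the S-Tier models on the benchmark that matters most for a project, the figures quoted above can be collected into a small lookup. The sketch below is illustrative only: `S_TIER` and `rank_by` are hypothetical names, the scores are copied from this guide, and a model is simply left out of a ranking when the guide quotes no score for that benchmark.

```python
# Scores copied from the model descriptions in this guide.
# A missing key means the guide quotes no figure, not that the model scores poorly.
S_TIER = {
    "Kimi K2.5": {"HumanEval": 99.0, "MMLU": 92.0, "IFEval": 94.0,
                  "MATH-500": 98.0, "GPQA Diamond": 87.6, "Chatbot Arena": 1447},
    "GLM-4.7": {"HumanEval": 94.2, "AIME 2025": 95.7,
                "GPQA Diamond": 85.7, "LiveCodeBench": 84.9},
    "GLM-5": {"Chatbot Arena": 1454, "SWE-bench Verified": 77.8,
              "GPQA Diamond": 86.0},
    "MiniMax M2.5": {"SWE-bench Verified": 80.2},
    "DeepSeek V3.2": {"MMLU-Pro": 85.0, "AIME 2025": 89.3,
                      "GPQA Diamond": 79.9, "Chatbot Arena": 1423},
    "Step-3.5-Flash": {"SWE-bench Verified": 74.4, "LiveCodeBench": 86.4,
                       "AIME 2025": 97.3},
}


def rank_by(benchmark):
    """Return (model, score) pairs for one benchmark, highest score first."""
    scored = [
        (name, scores[benchmark])
        for name, scores in S_TIER.items()
        if benchmark in scores
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    for name, score in rank_by("SWE-bench Verified"):
        print(f"{name}: {score}")
```

A ranking on one benchmark is only a starting point. Context window, parameter count, and license still need to be weighed against it.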

Other Notable Open Source AI Models

S-Tier models represent the pinnacle. Other open-source options offer specialized advantages or different resource requirements. These models expand your toolkit for diverse engineering challenges.

Qwen3.5-397B-A17B (Alibaba)

Alibaba's Qwen3.5 is a multimodal powerhouse. This 397 billion parameter MoE model has a 262K native context window that is extendable to over 1 million tokens. Its decoding throughput is 8.6x to 19x higher than that of its predecessors. Qwen3.5 integrates vision and language early. This makes it strong across instruction following, reasoning, coding, agentic, and multilingual tasks (200+ languages). Long sequences can demand up to 1TB of GPU memory.

Nemotron Family (NVIDIA)

NVIDIA's Nemotron models are fully open-source. They provide open weights, training data, and recipes for building specialized AI agents. This family includes Nemotron Nano 30B, Nemotron Super 49B, and Nemotron Ultra 253B. Their comprehensive openness supports deep customization and agent development, a growing trend in enterprise software.

Llama 4 Maverick (Meta)

Meta's Llama 4 Maverick has 400 billion parameters and a 1 million token context. It signifies Meta's continued commitment to open innovation. Its massive context window positions it for tasks requiring extensive information processing and retention.

Mistral Large (Mistral)

Mistral Large, at 675 billion parameters and a 256K context window, offers another robust option. Mistral AI has consistently delivered high-performing models. This makes it a strong contender for general-purpose applications where performance and context are critical.

Practical Considerations for Open Source AI Models

Deploying open source AI models isn't always straightforward. Resource allocation is a common hurdle. High-parameter models, especially those with large context windows, demand significant GPU memory. Plan your infrastructure carefully. A model might be excellent on paper but impractical without the necessary hardware.
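A rough first check on hardware is the memory needed just to hold the weights: parameter count multiplied by bytes per parameter. The sketch below works through that arithmetic. The function names, the 80 GB per-GPU figure, and the 1.2 overhead factor are assumptions chosen for illustration, not measured values. The estimate also excludes the KV cache, which grows with context length and can dominate at long sequences.

```python
import math

# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billions, precision="fp16"):
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    total_bytes = params_billions * 1e9 * BYTES_PER_PARAM[precision]
    return total_bytes / 1e9


def min_gpus(params_billions, precision="fp16",
             gpu_memory_gb=80.0, overhead=1.2):
    """Lower bound on GPU count for the weights.

    gpu_memory_gb and overhead are assumed values: overhead is a crude
    allowance for activations and runtime buffers, not a measurement.
    """
    needed_gb = weight_memory_gb(params_billions, precision) * overhead
    return math.ceil(needed_gb / gpu_memory_gb)


if __name__ == "__main__":
    # Parameter counts taken from the model descriptions in this guide.
    for name, params in [("MiniMax M2.5", 230), ("DeepSeek V3.2", 685)]:
        for precision in ("fp16", "int4"):
            print(name, precision,
                  f"{weight_memory_gb(params, precision):.0f} GB,",
                  f"at least {min_gpus(params, precision)} GPUs")
```

For mixture-of-experts models, the total parameter count is generally the one that applies here, since every expert typically has to be resident in memory even though only a few are active per token. Treat the result as a floor and confirm against the serving framework's own measurements.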

Another point of friction involves licensing. Always verify the specific license for each model. "Open weights" does not automatically mean unrestricted commercial use. Understand the terms to avoid future compliance issues. Community support also plays a role. Models with active communities often provide better documentation, faster bug fixes, and more shared resources for fine-tuning and deployment.

The Future of Open Source AI

The trajectory of open-source AI points towards increasingly sophisticated capabilities. We are seeing a shift from simple chatbots to autonomous AI agents performing multi-step tasks. Multimodal capabilities—integrating text, images, video, and audio—are becoming standard. "World models," designed to understand the physical world, are emerging. These have implications for robotics and scientific research. Competition is intense, with non-US labs often leading in specific niches. Expect specialized models to continue gaining ground, moving away from a "one-size-fits-all" approach. This dynamic environment means continuous learning and adaptation are paramount for engineers leveraging these powerful tools.