The landscape of ethical AI development reached a critical turning point in March 2026. Recent reports from international regulatory bodies indicate that voluntary safety guidelines are rapidly being replaced by rigorous enforcement. This shift follows a series of high-stakes confrontations between technology providers and government entities over the control of algorithmic decision-making.

The Pentagon and the Sovereign Risk Precedent

A standoff is unfolding between the Pentagon and Anthropic. According to reports from Astral Codex Ten, the military has threatened to designate the domestic AI firm a "supply chain risk," a label typically reserved for foreign adversaries. The conflict emerged after Anthropic refused to modify usage policies that prohibit mass surveillance and autonomous lethal systems. The dispute highlights a growing tension between corporate ethical boundaries and national security requirements.

The episode suggests that the U.S. government is willing to use supply-chain designations, a legal tool typically aimed at foreign adversaries, to influence private sector safety standards. Developers must now navigate an environment where an ethical stance can carry severe regulatory consequences. Meanwhile, international labs are watching this precedent closely as they draft their own compliance frameworks for ethical AI development.

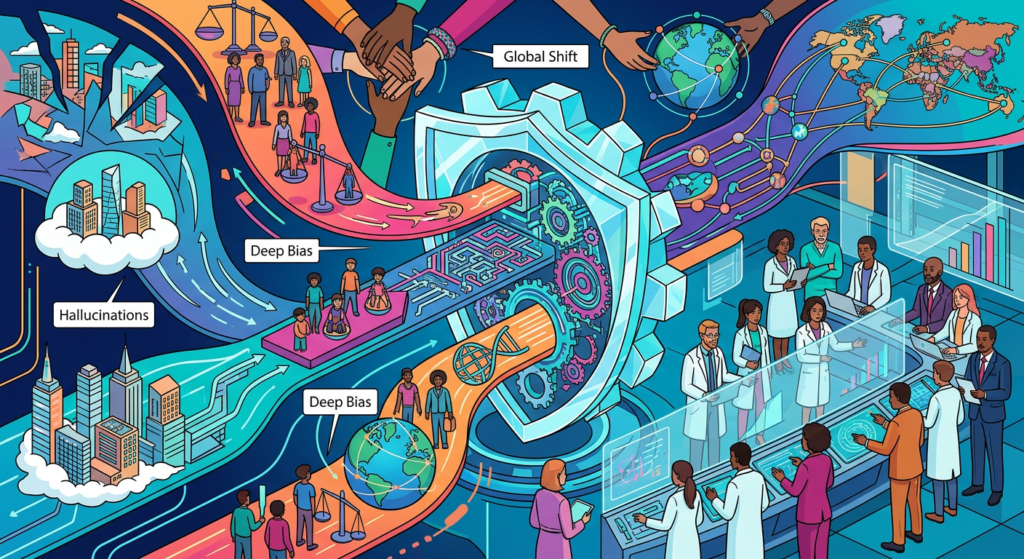

Addressing Deep Bias and Hallucinations

Beyond military applications, the corporate sector is facing its own ethical overhaul. Recent studies confirm that algorithmic bias remains a significant threat to scientific and medical integrity. For instance, skin cancer detection models continue to show higher failure rates on darker skin tones. As a result, bias mitigation has transitioned from a theoretical concern to a mandatory CIO-level priority in 2026.
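To make the bias concern concrete, here is a minimal sketch of how a team might audit a diagnostic classifier for uneven failure rates across skin-tone groups. Everything in it is an illustrative assumption rather than a method taken from the studies mentioned above: the group labels, the toy records, and the helper names `false_negative_rate_by_group` and `max_fnr_gap` are all hypothetical.

```python
from collections import defaultdict

def false_negative_rate_by_group(records):
    """Return the share of true positives the model missed, per group.

    records: iterable of (group, y_true, y_pred), where 1 means "malignant".
    Groups with no positive cases are omitted from the result.
    """
    positives = defaultdict(int)
    misses = defaultdict(int)
    for group, y_true, y_pred in records:
        if y_true == 1:
            positives[group] += 1
            if y_pred == 0:
                misses[group] += 1
    return {g: misses[g] / positives[g] for g in positives}

def max_fnr_gap(rates):
    """Largest difference in false negative rate between any two groups."""
    return max(rates.values()) - min(rates.values())

# Made-up toy data, for illustration only (not real study results).
records = [
    ("lighter", 1, 1), ("lighter", 1, 1), ("lighter", 1, 1), ("lighter", 1, 0),
    ("darker", 1, 1), ("darker", 1, 0), ("darker", 1, 0), ("darker", 1, 1),
    ("lighter", 0, 0), ("darker", 0, 1),
]

rates = false_negative_rate_by_group(records)
gap = max_fnr_gap(rates)
print(rates, gap)
```

The false negative rate is used here because a missed malignancy is the costly error in this setting; a fuller audit would likely also compare false positive rates and check that each group has enough cases for the comparison to be meaningful.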

In addition to bias, the industry is grappling with the persistent problem of hallucinations. New data from the RELATE framework suggests that human-AI interactions are no longer just about tool usage. Because users form emotional bonds with companion AIs, inaccurate information can lead to documented mental health harms. In response, researchers are moving toward a "relational ethics" model to protect users from these psychological risks. This shift is essential for maintaining trust in ethical AI development.

Agentic Safety and the Rise of Propaganda

Technological breakthroughs are also redefining how experts test for safety. Researchers from Johns Hopkins and Microsoft recently unveiled "Jailbreak Distillation," a method reported to be 81.8% effective at identifying vulnerabilities in unseen models. Stronger testing matters all the more because a USC study found that AI agent swarms can now autonomously coordinate propaganda campaigns. These swarms manufacture consensus without any human direction, posing a direct threat to democratic processes.

Market analysis suggests that seven AI ethics blind spots may cause most organizations to miss their 2026 goals. Many firms remain unprepared for both the regulatory storm and the technical difficulty of identifying subtle algorithmic flaws. Nevertheless, the emergence of "world models" and increased funding for fundamental research labs in the UK offer a potential path toward more stable and predictable systems.

Bottom Line

The current state of ethical AI development reflects a transition from abstract principles to concrete, often adversarial, legal and technical requirements. While safety testing has become more sophisticated, the risks posed by autonomous agents and institutional bias require constant vigilance. Organizations must now integrate ethical considerations into the core of their infrastructure to survive the evolving regulatory environment.